TL;DR:

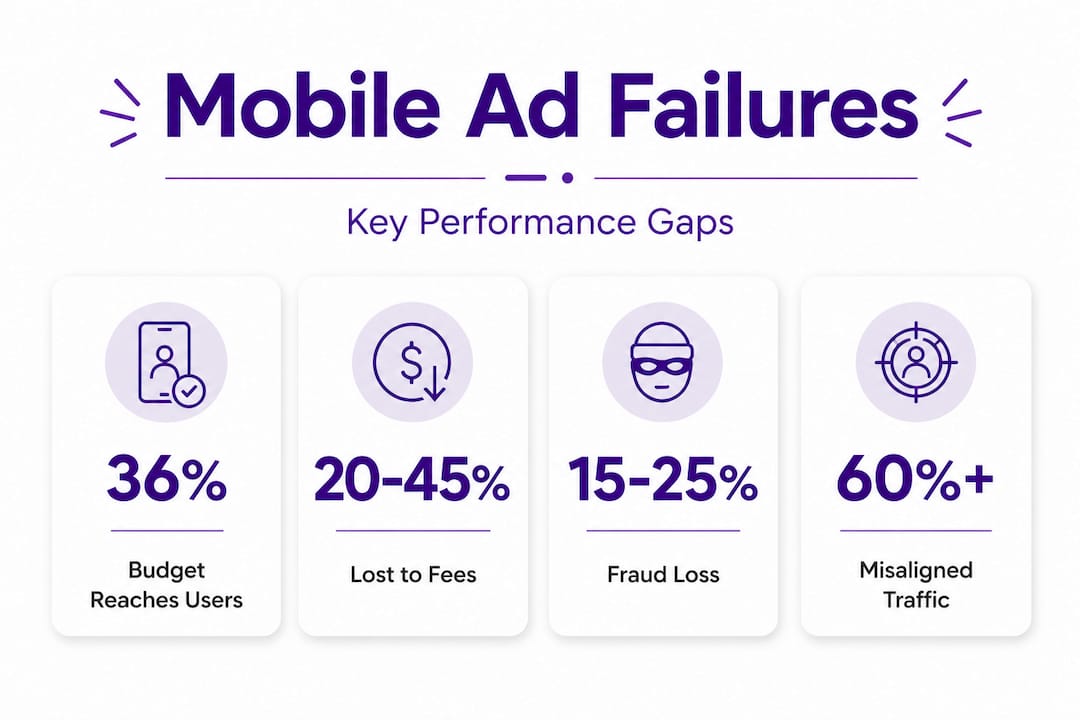

- Only 36% of mobile ad spend effectively reaches real users, due to programmatic waste and fraud.

- Structural inefficiencies and misalignment between creative and landing experiences cause campaigns to underperform.

- Rapid testing, team alignment, and supply chain auditing are key to improving results.

Mobile advertising budgets are growing every year, yet a striking proportion of campaigns return disappointing results. Research confirms that only 36% of ad spend effectively reaches real users, with the remainder swallowed by fees, fraud, and low-quality inventory. For marketing managers and user acquisition specialists running playable ad campaigns, this figure is not an abstract statistic. It is a direct explanation for why click-through rates can look promising while return on investment remains stubbornly low. This article maps out the specific failure points you need to understand and provides clear, structured frameworks to reverse them.

Table of Contents

- The real reasons mobile ads underperform

- Programmatic pitfalls: where your ad budget really goes

- Creative and landing page misalignments: the conversion killer

- UA team challenges: silos, slow testing and skewed priorities

- Proven frameworks for fixing failed mobile ad campaigns

- Why most advice on mobile ad failure misses the point

- Take your playable ads to the next level

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Budget leakage | Most mobile ad spend is lost before reaching users due to ecosystem inefficiencies. |
| Creative misalignment | Ads that don’t match the landing experience cause low conversion even with high click-throughs. |
| Team and testing speed | Rapid, cross-functional creative testing directly affects playable ad ROI and UA growth. |
| Framework-driven fixes | Combining process realignment, fast prototyping and unified KPIs can rescue failing campaigns. |

The real reasons mobile ads underperform

Most post-mortems on failed campaigns focus on creative quality. The visuals were not engaging enough, or the call-to-action was poorly placed. These are legitimate concerns, but they rarely tell the full story. Structural inefficiencies within the mobile advertising ecosystem cause significant damage long before your creative even reaches a human eye.

The core drivers of underperformance include:

- Programmatic waste: Only 36% of spend reaches users after accounting for intermediary fees, ad fraud, and low-quality placements

- Creative-to-landing misalignment: When the promise made in your playable ad does not match what users encounter after clicking, conversions suffer immediately

- Team silos: Disconnected creative, analytics, and media buying functions produce slow feedback loops and missed optimisation opportunities

- Short-term ROAS fixation: Prioritising this week’s return on ad spend over long-term user quality consistently undermines sustainable growth

Understanding these root causes collectively is important because addressing only one of them rarely moves the needle. Campaigns fail for intersecting reasons, and recovery requires targeting several failure points at once.

“The most expensive assumption in mobile marketing is that a budget increase will compensate for structural inefficiency. It rarely does. More spend through a broken system simply produces a larger loss.”

Aligning your team around mobile ad best practices from the outset is far more effective than trying to reverse poor structural decisions mid-flight. The earlier these foundations are examined, the less costly the correction becomes.

Programmatic pitfalls: where your ad budget really goes

To understand why so much spend is lost, it helps to trace the journey of a single ad budget through the programmatic ecosystem. An advertiser commits a budget, which then passes through a demand-side platform, one or more supply-side platforms, an ad exchange, and finally a publisher. At each stage, fees are deducted. By the time the ad is served, a significant portion of the original budget has been consumed by the infrastructure itself.
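Because each intermediary takes its cut from whatever is left after the previous one, fees compound rather than simply add up. The sketch below illustrates this in Python. The take rates are hypothetical and chosen only for illustration; they are not figures from this article or from any real partner, and the chain assumes exactly one DSP, one SSP, and one exchange.

```python
# Hypothetical take rates for illustration only; real rates vary by partner and contract.
HYPOTHETICAL_TAKE_RATES = {
    "dsp": 0.10,
    "ssp": 0.08,
    "exchange": 0.04,
}


def remaining_after_fees(budget: float, take_rates: dict[str, float]) -> float:
    """Return the budget left for media after each intermediary deducts its cut in turn."""
    remaining = budget
    for stage, rate in take_rates.items():
        if not 0.0 <= rate < 1.0:
            raise ValueError(f"take rate for {stage} must be in [0, 1)")
        # Each stage takes its percentage of what the previous stage passed on.
        remaining *= 1.0 - rate
    return remaining


if __name__ == "__main__":
    budget = 10_000.0
    left = remaining_after_fees(budget, HYPOTHETICAL_TAKE_RATES)
    print(f"{left:.2f} of {budget:.2f} reaches the publisher ({left / budget:.1%})")
```

With these assumed rates, about 79.5% of the budget reaches the publisher. The combined fee loss (about 20.5%) is slightly smaller than the simple sum of the three rates (22%) because each fee is taken from a shrinking amount.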

Beyond fees, fraud compounds the problem significantly.

| Budget loss category | Estimated share of total spend lost |
|---|---|
| Intermediary fees (DSP, SSP, exchanges) | 15 to 25% |
| Ad fraud (bots, click farms, spoofing) | 10 to 20% |
| Low-quality or non-viewable placements | 10 to 15% |
| Mismatched audience targeting | 5 to 10% |

These figures help explain why only about 36% of programmatic spend actually reaches genuine consumers. When performance data is built on this distorted foundation, accurate decisions become difficult. A campaign that appears to be underperforming may actually have strong creative that is simply not being seen by the right people.
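A quick way to sanity-check the 36% figure against the budget-loss table is to add up its ranges. This minimal sketch assumes the four categories are non-overlapping shares of the original budget, so they can simply be summed; real-world losses may overlap (for example, fraudulent traffic that is also non-viewable).

```python
# Loss ranges from the budget-loss table, as fractions of the original budget.
LOSS_RANGES = {
    "intermediary_fees": (0.15, 0.25),
    "ad_fraud": (0.10, 0.20),
    "low_quality_placements": (0.10, 0.15),
    "mismatched_targeting": (0.05, 0.10),
}


def reach_range(loss_ranges: dict[str, tuple[float, float]]) -> tuple[float, float]:
    """Return (worst case, best case) share of spend that reaches real users.

    Assumes the loss categories are additive and do not overlap.
    """
    min_loss = sum(low for low, _ in loss_ranges.values())
    max_loss = sum(high for _, high in loss_ranges.values())
    return 1.0 - max_loss, 1.0 - min_loss


if __name__ == "__main__":
    worst, best = reach_range(LOSS_RANGES)
    print(f"Share of spend reaching users: {worst:.0%} to {best:.0%}")
```

Under these assumptions, 30 to 60% of spend reaches users, and the 36% figure sits toward the pessimistic end of that range.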

Note: If your effective cost per acquisition looks consistently high despite good creative, consider auditing where your budget is actually going before revising your creative approach.

This matters especially for playable ads, which require genuine user engagement to function correctly. A playable ad viewed by a bot or displayed in a non-viewable position generates no genuine interaction data and therefore no real learning. The creative testing cycle is corrupted from the start.

Pro Tip: Request a detailed transparency report from your programmatic partners that breaks down fees and shows the percentage of verified human impressions. Any partner unwilling to provide this level of detail should be treated with caution.
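The two numbers this tip asks for can be turned into simple ratios for comparing partners. The report fields below are hypothetical. Real transparency reports differ by partner in what they expose and in how they define a verified impression, and the example values are invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class TransparencyReport:
    """Hypothetical subset of fields a programmatic partner might report."""
    gross_spend: float                # total paid by the advertiser
    fees: float                       # intermediary fees deducted from that spend
    impressions: int                  # impressions served
    verified_human_impressions: int   # impressions passing third-party verification


def summarise(report: TransparencyReport) -> dict[str, float]:
    """Return fee share, verified-human rate and cost per 1,000 verified impressions."""
    if report.gross_spend <= 0 or report.impressions <= 0:
        raise ValueError("gross spend and impressions must be positive")
    verified = report.verified_human_impressions
    return {
        "fee_share": report.fees / report.gross_spend,
        "verified_human_rate": verified / report.impressions,
        # Effective CPM on verified humans only; infinite if none were verified.
        "cost_per_1k_verified": (
            1000 * report.gross_spend / verified if verified else float("inf")
        ),
    }


if __name__ == "__main__":
    example = TransparencyReport(10_000.0, 2_000.0, 2_000_000, 1_400_000)
    print(summarise(example))
```

Comparing cost per 1,000 verified impressions, rather than raw CPM, puts fee and fraud differences between partners into a single comparable number.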

Applying high-impact ad creative tips only pays off when the serving environment is clean enough to let that creative perform honestly. Auditing your supply chain and working with verified, fraud-protected inventory is a prerequisite for meaningful creative testing, not an optional extra.

Creative and landing page misalignments: the conversion killer

One of the most consistently underappreciated causes of campaign failure is the gap between what an ad promises and what users actually experience after they engage. This is sometimes called the expectation gap, and it is particularly damaging for playable ads.

A playable ad creates an immersive, interactive impression of what an app or game offers. When the user taps through and encounters an app store page or landing experience that feels disconnected from that interaction, trust evaporates. The conversion that seemed assured by a strong click-through rate simply does not materialise. Research on ad optimisation confirms that strong clicks with weak conversions are frequently caused by creative-to-landing mismatch rather than audience quality problems.

Consider four scenarios side by side:

| Scenario | Click-through rate | Conversion rate | Root cause |
|---|---|---|---|
| Engaging playable, generic app store landing | High | Very low | Expectation gap |
| Engaging playable, tailored landing page | High | High | End-to-end alignment |
| Weak playable, tailored landing page | Low | Moderate | Creative quality issue |
| Weak playable, generic app store landing | Very low | Very low | Both problems present |

The lesson here is that creative quality and destination quality must be improved together, not in isolation. Many teams optimise their playable creative without ever questioning whether the post-click experience reinforces the same message, visual language, and promise.
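The scenario table can be encoded as a simple first-pass diagnostic. In this sketch the benchmarks are hypothetical inputs you would set from your own historical campaigns, and the table's "moderate" and "high" conversion levels are collapsed into a single above-benchmark bucket, so it is a rough triage aid rather than a definitive classifier.

```python
def diagnose(ctr: float, cvr: float, ctr_benchmark: float, cvr_benchmark: float) -> str:
    """Map click-through and conversion rates onto the scenario table's root causes.

    ctr_benchmark and cvr_benchmark are hypothetical thresholds taken from
    your own past campaigns; "high" means at or above the benchmark.
    """
    high_ctr = ctr >= ctr_benchmark
    high_cvr = cvr >= cvr_benchmark
    if high_ctr and not high_cvr:
        return "expectation gap: check creative-to-landing alignment"
    if high_ctr and high_cvr:
        return "end-to-end alignment"
    if high_cvr:
        return "creative quality issue"
    return "both problems present"


if __name__ == "__main__":
    # Strong clicks, weak conversions: the classic expectation gap.
    print(diagnose(ctr=0.05, cvr=0.01, ctr_benchmark=0.02, cvr_benchmark=0.03))
```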

Ways to diagnose and fix misalignment include:

- Review the user journey as a whole: Run the full path yourself, from first impression through to install or registration, and note every moment where friction or confusion appears

- Match visual language: Colours, character styles, and gameplay elements shown in the playable should appear consistently on the landing page or app store listing

- Align the value proposition: Whatever benefit the playable communicates should be reinforced, not contradicted or replaced, in the landing experience

- Test iteratively: Use creative testing methods to compare matched versus unmatched creative-landing combinations directly

Pro Tip: A simple way to identify expectation gaps is to ask a colleague unfamiliar with the campaign to describe what they expect after interacting with your playable, before they actually click through. Comparing their expectation to the actual landing experience often reveals blind spots that internal teams have stopped noticing.

The same principle explains why testing creatives matters so much in UA. Testing creatives in isolation, without examining the full conversion pathway, misses the most impactful variables.

UA team challenges: silos, slow testing and skewed priorities

Even well-funded teams with excellent creatives can fail when the operational structure works against them. User acquisition teams face three interconnected challenges that are each difficult to address and even harder to solve simultaneously.

Here is how these challenges typically manifest:

- Slow creative testing cycles: Leading UA teams test over 30 creatives per week, a volume that most mid-sized teams simply cannot sustain with traditional production workflows

- Departmental silos: When creative, analytics, and buying functions operate separately, feedback from live campaigns reaches the creative team too slowly to inform meaningful iterations

- Short-term ROAS dominance: Focusing on immediate return on ad spend causes teams to abandon campaigns or creatives before they have gathered enough data, and discourages the experimentation needed for long-term gains

The speed issue is particularly acute for playable ads. Unlike static or video formats, playables require development time, technical testing, and iterative refinement. Without a streamlined production process, even small adjustments can take days to implement, review, and relaunch.

Understanding which creative types drive UA performance most effectively can help teams prioritise where to invest their limited production bandwidth. Not every format needs equal resource allocation.

Key structural improvements that address these challenges include:

- Cross-functional stand-ups: Short daily or twice-weekly syncs between creative, analytics, and buying ensure that performance signals translate quickly into creative action

- Tiered creative testing: Rather than producing many full-length playables at once, test simplified or shortened variations first to identify winning mechanics before investing in full production

- Balanced KPIs: Include metrics such as retention rate, lifetime value, and session quality alongside ROAS to prevent the premature elimination of potentially strong performers
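The balanced-KPI idea can be expressed as a pause rule that refuses to cut a creative on ROAS alone. The field names, the 200-install minimum and both floors are hypothetical defaults chosen for illustration, not recommended values; each team would calibrate them to its own economics.

```python
from dataclasses import dataclass


@dataclass
class CreativeStats:
    installs: int
    roas_d7: float        # 7-day return on ad spend, e.g. 0.35 means 35%
    retention_d7: float   # share of installers still active on day 7


def should_pause(stats: CreativeStats, min_installs: int = 200,
                 roas_floor: float = 0.2, retention_floor: float = 0.15) -> bool:
    """Pause only when there is enough data AND both ROAS and retention are weak.

    All thresholds are hypothetical defaults for illustration.
    """
    if stats.installs < min_installs:
        return False  # not enough data yet: keep gathering before judging
    return stats.roas_d7 < roas_floor and stats.retention_d7 < retention_floor


if __name__ == "__main__":
    # Weak early ROAS but strong retention: keep running.
    print(should_pause(CreativeStats(installs=500, roas_d7=0.1, retention_d7=0.3)))
```

Requiring both signals to be weak is a deliberately conservative choice: a creative with poor early ROAS but strong retention may still prove valuable once lifetime value accrues.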

The practical case for A/B testing playable ads rests largely on this last point. Teams that build A/B testing into their standard workflow are far less likely to make expensive decisions based on insufficient data. Following essential A/B testing steps helps ensure that conclusions drawn from tests are statistically sound rather than directional hunches.
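One common way to check whether a difference in conversion rate is more than noise is a two-proportion z-test. The sketch below uses only Python's standard library and the normal approximation. It is one reasonable method among several (chi-squared or Bayesian approaches also work), and the example counts are hypothetical.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the difference between two conversion rates.

    Uses the pooled normal approximation, which is reasonable when each
    variant has a healthy number of both conversions and non-conversions.
    """
    if n_a <= 0 or n_b <= 0:
        raise ValueError("sample sizes must be positive")
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("standard error is zero; both rates are 0% or 100%")
    z = (p_b - p_a) / se
    # Two-sided p-value: 2 * (1 - Phi(|z|)) equals erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


if __name__ == "__main__":
    # Hypothetical test: variant A converts 200/10,000, variant B 260/10,000.
    z, p = two_proportion_z_test(200, 10_000, 260, 10_000)
    print(f"z = {z:.2f}, p = {p:.4f}")
```

A small p-value (conventionally below 0.05) suggests the difference is unlikely to be chance alone. Deciding the sample size before the test starts, rather than stopping as soon as p dips below the threshold, keeps the result honest.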

Proven frameworks for fixing failed mobile ad campaigns

Recovery from a failing campaign is achievable, but it requires a structured approach rather than reactive creative changes. The following frameworks address the failure points identified above in a coordinated way.

Framework 1: Align team, tooling, and creative strategy

Before adjusting creative, confirm that your team structure, analytics tooling, and creative strategy are all pursuing the same objective. Misaligned goals between buying and creative produce contradictory signals that obscure what is actually working.

Framework 2: Rapid prototyping and iterative A/B testing

Research consistently shows that high creative volume and rapid ideation cycles are among the strongest predictors of UA success. This does not mean producing low-quality work at speed. It means building systems that allow you to test hypotheses quickly, learn from real user data, and refine confidently.

Key actions include:

- Establish a minimum viable creative (a streamlined version of a playable that tests one mechanic or message at a time) as the default first step

- Document every test clearly with a hypothesis, expected outcome, and results summary, creating an institutional knowledge base

- Use a structured A/B testing approach to isolate variables rather than changing multiple elements simultaneously
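The documentation and single-variable habits above can be built into a lightweight test log. This is a hypothetical structure, not a prescribed schema: each entry records a hypothesis, expected outcome and result summary, and construction fails if more than one variable is changed.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class CreativeTest:
    """One entry in a hypothetical creative-test log."""
    name: str
    hypothesis: str
    variables_changed: tuple[str, ...]  # elements that differ between variants
    expected_outcome: str
    started: date
    result_summary: str = ""            # filled in once the test concludes

    def __post_init__(self) -> None:
        # Enforce one variable per test so results can be attributed cleanly.
        if len(self.variables_changed) != 1:
            raise ValueError("change exactly one variable per test")

    def is_complete(self) -> bool:
        return bool(self.result_summary.strip())


@dataclass
class CreativeTestLog:
    tests: list[CreativeTest] = field(default_factory=list)

    def add(self, test: CreativeTest) -> None:
        self.tests.append(test)

    def open_tests(self) -> list[CreativeTest]:
        """Tests still awaiting a written result summary."""
        return [t for t in self.tests if not t.is_complete()]
```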

Framework 3: Repurpose and scale winning creative

Once a creative variant demonstrates strong performance, the next step is not to retire it and start fresh. Repurposing high-performing assets across formats, platforms, and audience segments extends their value without requiring equivalent production investment. Detailed guidance on repurposing ad creatives helps teams extract maximum value from proven concepts.

Framework 4: Unified KPIs across functions

Bring all team functions under a single set of KPIs that encompass the full user journey. This prevents creative teams from optimising for clicks while buying teams optimise for installs and analytics teams report on retention, each succeeding independently while the campaign as a whole underperforms.

Pro Tip: Create a shared campaign dashboard that every function can access in real time, showing metrics from impression through to long-term retention. When all parties can see the same numbers simultaneously, conversations become more productive and decisions faster.
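A shared dashboard starts with one agreed calculation of the funnel. This minimal sketch computes stage-to-stage rates from impression through day-7 retention; the stage names and the choice of day 7 are assumptions for illustration, and a real dashboard would pull these counts from your attribution and analytics tools.

```python
def funnel_metrics(impressions: int, clicks: int, installs: int,
                   retained_d7: int) -> dict[str, float]:
    """Compute stage-to-stage rates from impression through day-7 retention.

    Every function reads the same numbers, so no team optimises one stage in isolation.
    """
    def rate(numerator: int, denominator: int) -> float:
        # Guard against empty stages early in a campaign.
        return numerator / denominator if denominator else 0.0

    return {
        "ctr": rate(clicks, impressions),
        "click_to_install": rate(installs, clicks),
        "d7_retention": rate(retained_d7, installs),
        "impression_to_retained": rate(retained_d7, impressions),
    }


if __name__ == "__main__":
    print(funnel_metrics(impressions=100_000, clicks=3_000, installs=300, retained_d7=60))
```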

Why most advice on mobile ad failure misses the point

Most guidance on improving mobile ad campaigns gravitates towards tactics: better creative, smarter targeting, cleaner copy. This advice is not wrong, but it consistently misses something more fundamental. The structure within which those tactics operate often makes success impossible regardless of how well the individual elements are executed.

Consider a team producing genuinely excellent playable ads. The creative is compelling, the mechanic is engaging, and the targeting parameters are well-defined. But if the programmatic supply chain is not audited, a significant portion of impressions is wasted on non-human traffic. If the landing experience has not been updated to reflect the playable’s promise, click-throughs do not convert. And if creative feedback loops take two weeks to close, the iteration speed needed to respond to live data simply is not there.

Incremental improvements to each of these individual problems will move the needle slightly. But real, sustained improvement comes from treating the entire campaign system as an interdependent structure. Changing one component in isolation rarely unlocks breakthrough results.

The uncomfortable truth for many teams is that this level of integration requires investment beyond tools and budgets. It requires cultural change within the organisation. Creative teams need to understand basic analytics. Buying teams need to appreciate creative constraints. Leadership needs to accept that sustainable UA growth cannot be reduced to weekly ROAS targets.

Playable ads specifically demand this evolved approach. They are more complex to produce, more dependent on post-click alignment, and more sensitive to traffic quality than most formats. Teams that treat playables like scaled-up static banners consistently underperform. Those that rethink their entire workflow around the interactive nature of the format see the results that playable advertising genuinely makes possible.

Take your playable ads to the next level

The failure points covered in this article, from programmatic waste and creative misalignment to team silos and slow testing cycles, all share one common thread: they are structural, not creative. At PlayableMaker, we have built our no-code platform specifically to address the structural barriers that prevent teams from iterating quickly and effectively. Understanding what playable ads are and how they work is the first step. Appreciating the psychology behind their effectiveness is the second. The third is having a production process fast enough to act on what you learn. Start building with PlayableMaker and turn your campaign recovery frameworks into measurable, repeatable results without the development overhead.

Frequently asked questions

What is the biggest reason mobile ads fail?

The largest single factor is budget loss due to programmatic inefficiencies and fraud. Research shows that only 36% of spend reaches real consumers after fees, fraud, and poor inventory are accounted for.

Does aligning creative and landing page really matter?

Yes, significantly. Campaigns frequently achieve strong clicks but weak conversions when the ad experience and landing page tell different stories, destroying the trust the creative has built.

How many new creatives should a UA team be testing?

Top-performing user acquisition teams routinely test over 30 creatives per week, a volume that requires streamlined production workflows to sustain without sacrificing quality.

Can rapid testing and iteration solve most playable ad failures?

Frequent iteration and high-volume creative testing substantially improve playable ad performance, particularly when combined with supply chain auditing and creative-to-landing alignment rather than applied in isolation.
